
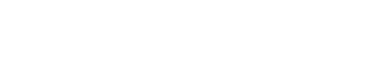

HDMI 2.1 with 8K resolution is no longer on the horizon; it is here, and it is creating headaches all over the world. This document is intended to help any integrator use the Fox and Hound kit to set up a distributed video system so that it is 8K compliant. The front page of the document acts as a “key” to the system diagram on the reverse side. The end goal of this document is to ensure that your video systems are compliant and ready for 8K, whether the components and content are in use today or will be used in the future. The numbers below are keyed to corresponding numbered elements in the diagram above. In this diagram, the generator is depicted with a green highlighted outline, and the analyzer is depicted with a purple highlighted outline. The generator is emulating a source outputting a test pattern. The analyzer is emulating the next device in the signal path, which may be the matrix switcher, the HDMI extension kit, or the display.


Has the time come for residential (and commercial) integrators who haven’t given so much as a nod to 8K to consider addressing installation infrastructure and video distribution equipment with 8K capabilities during sales discussions, or at least as suggested options in design proposals? At the cusp of any significant transition in this industry, many clasp the status quo for as long as possible, while others wholeheartedly embrace change. From component to DVI/HDMI digital, through HD resolution into UHD, the obstacles presenting installation challenges were numerous enough that campfire tales can be told until the end of time. Most were conquered, though a select legacy few remain to aggravate dealers and end users in 2023.

This newest foray into higher resolution is perhaps viewed by integrators with a misplaced perspective. Upgrading end users from 4K system architecture to implement NextGen capabilities outright does not make you synonymous with Jack of beanstalk lore, trading client dollars for 8K magic beans. Upgrading elevates end-user clients from 4K, 18Gbps bandwidth into 4K/8K and 40Gbps, the bandwidth cap for most current 8K devices using the best-performing chipset (though HDMI 2.1 allows for 48Gbps total bandwidth).

Let’s revisit HDMI 2.1’s technologies to examine what is required to properly equip an 8K system, and what is forfeited by sidestepping implementation at this time. HDMI 2.1 converted the original HDMI red, green and blue data channels and repurposed the TMDS clock channel (HDMI 2.1 uses an embedded clock derived from the transmitted data) into four Fixed Rate Link (FRL) “lanes” with an aggregate bandwidth capability of 48Gbps, governed by Link Training.
Link Training establishes the lane rate, the number of lanes used, and the equalization required for the pathway a signal will take, and determines whether fallback to TMDS is required when signals of 18Gbps or lower are attempting to pass and the Link Training State fails to initiate FRL.

The Greater Importance Of Bit Depth Moving Forward

8K HDMI 2.1 displays typically have at least two inputs which are up to 48Gbps capable (manufacturer-dependent). These displays use 10-bit panels (1,024 gradations) that are able to process video information with finer gradient steps in pixel light output and a greater palette of color shading than legacy 8-bit panels (256 gradations). 10-bit panels almost completely eliminate banding, a visible artifact occurring as a demarcation point where gradual shades of the same color transition in shading. Banding is described by many different terms, such as posterization and false contouring, but think of it as the sculpted outlined shape of a seashell.

In a video scene with an expansive blue sky, subtle shading variances are perceptible near the horizon where the intensity of sunlight is lessened. We see these transitions as uniform and unaltered in the natural world, but in video a careful observer may detect a gradient demarcation point where there would otherwise be a smooth transition in luminance. With 8-bit panels, random, distinct, radiant patterns are readily perceptible, appearing as the previously described seashell outline. 10-bit panels reduce artifacts like these, if present, to the point where casual observers find them indiscernible. Note, however, that the above refers to chroma upsampling artifacts and not to motion-induced ones.

Hollywood color grading monitors remain at 10-bit, which is perfectly suited to 1,000 nits of luminance with HDR10 (the “10” referring to bit depth).
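The gradation counts quoted above follow directly from bit depth: a channel with n bits can encode 2^n levels. A minimal Python sketch of that arithmetic follows; the function names are illustrative only and do not come from any HDMI tooling.

```python
def gradations_per_channel(bit_depth: int) -> int:
    """Number of distinct code values a single color channel can carry."""
    return 2 ** bit_depth


def total_colors(bit_depth: int, channels: int = 3) -> int:
    """Distinct colors when each of the R, G and B channels uses bit_depth bits."""
    return gradations_per_channel(bit_depth) ** channels


# Compare the panel bit depths discussed in the article.
for bits in (8, 10, 12):
    steps = gradations_per_channel(bits)
    print(f"{bits}-bit: {steps:,} steps per channel, {total_colors(bits):,} colors")
```

Each added bit doubles the number of steps per channel, so moving from 8-bit to 10-bit quadruples them (256 to 1,024), which is why gradient transitions become so much harder to spot.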
12-bit panels are reasonably far off in the future, as they would likely be designed to produce 10,000 nits (required for the pixel light output of a 12-bit, pure white signal). A Sony BVM-HX310, which is a 10-bit, 1,000 nit broadcast monitor, produces a noticeable and significant amount of heat after warming up for fifteen minutes prior to an HDR calibration. Designing monitors (or panels) for safe duty-cycle operation at 12-bit / 10,000 nits defies current technology, aside from research-related prototypes. Dolby’s Pulsar studio monitor remains at the top, producing 4,000 nits, and is internally liquid cooled. Some Neo-QLED sets produce 4,000 nit peaks, though a constant output of 1,500 to 2,000 nits is possible. For serious viewing, calibration is necessary to prevent clipping the signal.

HDMI 2.1 Beyond Just Bandwidth Extension

HDMI 2.1 introduced a suite of feature enhancements in addition to higher bandwidth. Included are support for Source Based Tone Mapping (SBTM), dynamic metadata HDR formats, Auto Low Latency Mode (ALLM) for lag elimination along with Quick Frame Transport (QFT), and on-the-fly Variable Refresh Rate (VRR) compensation. VRR and Quick Media Switching (QMS) combine to be profoundly useful for streaming devices such as Apple TV when it is appropriately set to Match Content and Match Frame Rate. Previously, a content change that also changed frame rate on the same source (for example, from a 24fps movie to a 60fps live broadcast) often forced HDMI 2.0-based systems into re-clocking and re-syncing, causing black screen delays plus EDID management issues with mixed display brands in distributed systems.

Often overlooked among the myriad HDMI 2.1 improvements is lip-sync compensation. The display exchanges data with sources to align video with audio. A useful but low-profile HDMI 2.1 benefit (HDMI 2.1a, Amendment 1), which will become more prominent as the 8K era unfolds, is HDMI Cable Power.
A legacy HDMI cable is able to draw up to 55 milliamperes from legacy HDMI 2.X chipsets, whereas HDMI Cable Power enables up to a 300 mA draw at 5V from HDMI 2.1 devices (the cable and the source component must both support the HDMI Cable Power feature). While this increase in available current may help extend passive copper-based HDMI cable lengths beyond 3 meters, you may be tempting fate. 48Gbps active optical cables will become the benchmark HDMI cable type for 8K systems when lengths longer than in-rack connections are necessary, and HDMI Cable Power will assist in meeting the performance requirements of longer runs. What should be common practice for integrators in this HDMI day and age is putting HDMI distant-run cabling inside a future-ready conduit, for easy replacement when the next generation of HDMI enhancement beckons a change.

Just When You Thought It Was Going To Be Okay...

HDMI 2.1 improvements will serve to make for a trouble-free system platform. But there is one mega-caveat: the HDMI Licensing Administrator (HDMI.org / HDMI LA), appointed by the HDMI Forum (the involved, founding manufacturers) for HDMI Version 2.1 licensing, has created a huge chasm of compliance loopholes. Instead of simplifying nomenclature so integrators and consumers can arrive at a complete understanding of what features a particular product has implemented, the polar opposite has come about. So much so that you cannot simply rely on a device or a display touting HDMI 2.1 credentials and expect it to tick all HDMI 2.1 specification boxes. The HDMI LA deprecated HDMI 2.0 in 2017, stating that HDMI 2.0 features have become a subset of HDMI 2.1, provided the display supports one, yes – singularly, one HDMI 2.1 feature. Holy Jumpin’ - that’s a problem or three. It will be incumbent upon integrators to verify whether a product supports all the HDMI 2.1 features your system design or client’s use habits will require, and to take nothing for granted.
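One way to make that verification habit concrete is a simple checklist comparison between the features a design requires and the features a product actually advertises. The sketch below is a hypothetical illustration: the feature labels, the required set, and the candidate display are all made up for the example, and the real list should come from the manufacturer's specification sheet and your own hands-on testing.

```python
# Hypothetical feature labels; replace with what your system design actually requires.
REQUIRED_FEATURES = {"FRL 40Gbps", "VRR", "ALLM", "QMS"}


def missing_features(device_features, required=REQUIRED_FEATURES):
    """Return the required features a device does not advertise (sorted for stable output)."""
    return sorted(set(required) - set(device_features))


# Illustrative only: not a real product's feature list.
candidate_display = {"VRR", "ALLM", "eARC"}

gaps = missing_features(candidate_display)
if gaps:
    print("Do not standardize on this SKU yet; missing:", ", ".join(gaps))
else:
    print("All required features advertised; proceed to hands-on testing.")
```

A label reading “HDMI 2.1” would pass no such check on its own; only the individual features count.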
End users make attempts, whenever possible, to be ahead of the consumer electronics curve, though occasionally in the past such temptation was unfairly rewarded with early adopters acquiring products that were not fully compatible when new formats were completely rolled out. Are you making certain design proposals accommodate those occasions when the client has already taken this leap? Clients who tend to make rogue purchases, extraneous to those you propose, will need to be provided with a features list to ensure what they intend to procure meets all the criteria your system design stipulates.

If your design and deployment disciplines focus on making systems as identical to each other as practical for programming repeatability and installation familiarity, acquire a single SKU and test it completely for every feature expected to be used with all associated products it will be joining in the system, before committing to making it a “standard” item. If you discover a particular display does not conform with your design parameters, as Joel Silver, President and founder of the Imaging Science Foundation, espouses: “Vote with your purchase orders.” Voting a non-compliant product out of your mix will eliminate installation issues and end-user aggravation.

Old And New Tech Stew

Our Tech Support staff reports that compatibility issues between HDMI 2.X pathways and HDMI 2.1-equipped devices are a frequent cause for support line calls. In many instances, integrators unnecessarily try to push too large a signal down a wire, often eclipsing 18Gbps. A common example is the attempt to pass uncompressed 4K/60fps, 4:4:4, HDR10+ from a source component, a signal which requires 20.05 Gbps.
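To see why a 4K/60 4:4:4 signal with more than 8 bits per channel cannot fit into an 18Gbps pathway, the rough Python estimate below may help. It is a sketch under stated assumptions: standard CTA-861 4K/60 timing (4400 x 2250 total pixels including blanking, a 594 MHz pixel clock), three TMDS channels, and 10 line bits per 8 data bits. Other calculators make different assumptions about blanking and overhead, so this is not meant to reproduce the exact figure quoted above, only the order of magnitude and the effect of chroma format.

```python
# Total 4K/60 raster including blanking, assuming standard CTA-861 timing (594 MHz).
PIXEL_CLOCK_4K60_HZ = 4400 * 2250 * 60

TMDS_CHANNELS = 3        # three TMDS data channels
TMDS_BITS_PER_CHAR = 10  # 8b/10b coding: 8 data bits travel as 10 line bits
TMDS_LIMIT_GBPS = 18.0   # the 18Gbps ceiling discussed in the article


def tmds_rate_gbps(pixel_clock_hz, bit_depth, chroma="444"):
    """Estimate the aggregate TMDS line rate in Gbps for a given format."""
    if chroma == "444":
        # Deep color scales the TMDS clock by bit_depth / 8.
        char_rate = pixel_clock_hz * bit_depth / 8
    elif chroma == "420":
        # 4:2:0 halves the clock before the deep color scaling.
        char_rate = pixel_clock_hz / 2 * bit_depth / 8
    elif chroma == "422":
        # 4:2:2 rides in a fixed container at the 8-bit 4:4:4 rate (up to 12-bit).
        char_rate = pixel_clock_hz
    else:
        raise ValueError(f"unsupported chroma format: {chroma}")
    return char_rate * TMDS_CHANNELS * TMDS_BITS_PER_CHAR / 1e9


for chroma, bits in [("444", 8), ("444", 10), ("422", 12), ("420", 10)]:
    rate = tmds_rate_gbps(PIXEL_CLOCK_4K60_HZ, bits, chroma)
    verdict = "fits within" if rate <= TMDS_LIMIT_GBPS else "exceeds"
    print(f"4K/60 {chroma} {bits}-bit: {rate:.2f} Gbps ({verdict} 18Gbps)")
```

Under these assumptions only the 10-bit 4:4:4 case overshoots the ceiling, which is consistent with the advice to leave the source at its native chroma format and let the display do the upconversion.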
In an article for the AVPro Newsletter (see: https://www.avproedge.com/news/setting-source-device-output-in-distributed-video-and-av-over-ip-systems) I described how, in distributed video systems, sending a signal containing only the original information in the source, and resisting additional chroma upsampling, will allow the signal to travel safely and consistently through properly configured HDMI 2.X installations. At the display end, all necessary upconversion will, in nearly every circumstance, be better performed by the display. The article points out that select source components, such as the venerable Oppo 205 and Kaleidescape players, are capable of perfect video processing, but they are best utilized directly connected to the premium components in a system rather than serving as distributed sources. It’s not that a top-tier component like Kaleidescape can’t or shouldn’t be a distributed source; rather, integrators try to send the largest signal down the longest, narrowest pipe, and as a result, a performance reduction becomes the only means for trouble-free distribution, compromising signal fidelity at the system location where the best gear is located.

A common example of where the HDMI generation transition bottleneck might occur is a system where the display and the AVR are replaced with new HDMI 2.1 products, but an existing 18Gbps extension kit or an HDMI/HDBaseT hybrid switcher for distribution remains in place, with the intention of updating it when 8K content is more prominent. Ambitious integrators always want to deliver the best performance for their clients, which, naturally, is highly encouraged. What Tech Support finds with many calls is that, while a source is not 8K, HDMI 2.1 devices looking to verify up-to-date EDIDs encounter legacy HDMI 2.X and even HDMI 1.4 devices. As explained above, Link Training State checks are sometimes unable to establish FRL, instead defaulting to TMDS.
HDMI 2.1 chipsets may have difficulty making a connection, and HDCP denies display of the content. Many calls report that Apple TV or Roku work as intended, but cable and satellite boxes will not, through the same HDMI switcher. More deeply steeped in mystery, testing with a Murideo Fox and Hound kit shows the signal makes it as far as the display (sink), and the analyzer sees it when the signal path is tested, but the TV will not produce an image. Our AVPro Edge engineers have often configured custom EDIDs to address and overcome complex issues, with Tech Support downloading these EDIDs remotely into our HDMI extenders and switchers. This is a testament to the AVPro Edge Tech Support and Engineering teams’ vow to never let you down in the field, and to design foresight during product planning and development for unique, flexible devices. But the solution is to replace all devices as a “system”.

8K Needs In The Here And Now

As has been fairly pointed out, little 8K content and no source devices aside from game consoles exist, and nothing is peering over the far horizon suggesting this may change soon. Some 8K content exists on YouTube, accessed via TV apps, but are these really complete 8K signals, or heavily color compressed? Amazon is streaming 8K-related content from The Lord of the Rings: The Rings of Power (though not the actual series) via Samsung TV Plus, available on 2020 and newer Neo QLED 8K TVs along with ‘The Wall’ 8K Micro LED TVs, in an attempt to prod interest in 8K.

Keep in mind, while 8K demands bandwidth greater than 18Gbps, so does 4K/120Hz gaming. PlayStation 5 at 32Gbps and Xbox Series X at 40Gbps outputs require at least Ultra High Speed HDMI cables for direct-connect performance to a compatible display. But gaming households usually have multiple consoles and even a dedicated gaming computer, and not all HDMI 2.1 4K / 8K televisions or AVRs are equipped with an adequate number of 40-48Gbps HDMI ports.
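The console figures above map onto the FRL lane arrangement described earlier. As a rough sketch, assuming the commonly published HDMI 2.1 FRL rate table (three or four lanes at 3 to 12 Gbps per lane), the Python snippet below picks the lowest link rate able to carry a given source output. It compares raw link rates only and ignores encoding overhead, and the function name is illustrative.

```python
# FRL link rates as (lanes, Gbps per lane); assumed from the published HDMI 2.1 rate table.
FRL_RATES = [(3, 3), (3, 6), (4, 6), (4, 8), (4, 10), (4, 12)]


def lowest_frl_rate(required_gbps):
    """Return (lanes, gbps_per_lane, total_gbps) for the first rate that fits, else None."""
    for lanes, per_lane in FRL_RATES:
        total = lanes * per_lane
        if total >= required_gbps:
            return lanes, per_lane, total
    return None


# Source output figures as quoted in the article.
for name, gbps in [("PlayStation 5", 32), ("Xbox Series X", 40), ("Full HDMI 2.1", 48)]:
    lanes, per_lane, total = lowest_frl_rate(gbps)
    print(f"{name}: needs {gbps}Gbps -> {lanes} lanes x {per_lane}Gbps = {total}Gbps")
```

A port or switcher that tops out below the rate a source asks for is exactly where Link Training has to negotiate down, so it is worth checking every hop in the chain against the most demanding source.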
AVPro Edge was first to market with an 8K HDMI switcher, the 8x8 AC-MX-88X (designed for wider distribution needs). The AVPro Edge AC-MX-42X solves the above scenario with four HDMI 2.1-capable inputs and a main output sending switched signals to the display, while a secondary output routes a downscaled 1080p signal to an AVR accompanied by uncompressed audio, maintaining high bitrate audio codecs such as Dolby Atmos and DTS:X. Next-gen consoles teased 8K, but game authoring isn’t near that level yet, and despite mutterings of a PS5 Pro or updated Xbox Series X appearing in 2023 to delve more deeply into the 8K universe, they remain rumors.

A Few Words On 8K Display Technology

8K consumer televisions are no longer new entrants to the marketplace; rather, they are now considered mainstream products. Some models are based on existing technology, such as OLED, while others are hybrid marriages of different aspects of video designs, not too different from Formula 1 cars, and demand F1-level monetary outlays. Cutting-edge offerings are at eye-watering prices, but slowly maturing designs have descended to become somewhat palatable, with entry-level models market-competitive alongside top-range 4K displays.

OLED prices for 8K sizes above seventy inches still remain somewhat stout, principally from manufacturing materials costs. An 8K OLED TV can require as many as 500 million expensive, high quality transistors – with multiples necessary per sub-pixel, supported by compensation circuitry. OLED is an emissive technology, not backlight-transmissive like LED-based LCD (OLED pixels produce the outputted light; backlit light is not driven through them). To reach consumer-acceptable levels of light output, these transistors, located on the backplane and consolidated into large monolithic chipsets, are required to produce a fair amount of current.
OLED manufacturing is a complicated process, with lower yields translating to increased retail pricing.

Another approach to 8K is Mini LED. This technology features highly detailed image quality with near-OLED black level attained by turning the LEDs off, as with OLED. With Mini LED, instead of addressable red, green, blue and white OLED pixels (unlike Sony’s recently retired broadcast monitors, which were true RGB OLEDs), multiple pixels are illuminated by one mini-LED. Whereas 8K OLED derives superior image fidelity from pixel density, Mini LED achieves higher light output from LEDs that are 1/40th the size of those regularly used with edge-lit displays, while packing 10 times more firepower into the backplane on premium sets. Although the LEDs do not correspond one-to-one with pixels as in OLED, they do turn off when black in a scene is required, dramatically reducing traditional LED-LCD halo effects, though these are not completely eliminated. OLED still gets a hat tip from most for the ultimate in black level.

Advances in panel technology are making inroads which may soon make 8K televisions commonplace. Inkjet printing techniques for quantum dot conversion layers have been developed to support 8K resolution for Quantum Dot OLED (QD-OLED). This doesn’t address the transistor-related backplane issue and its associated expense, but it is the first step in paving the way for a mass production process to replace costly specialty assembly.

8K displays have superior image processors that, rare in the video world, have the potential to make the original 4K image look better than on a 4K native display. How?
With four times the pixel density, and four times as many pixels for illumination, an 8K display will render diagonal artifacts undetectable from any viewing distance and can be viewed from farther away than what the “experts” said was required to achieve the benefits of the increased resolution (closer is fine, but not a necessity), while contrast and dynamic range are enhanced in comparison to a same-sized 4K display.

So, What Does This Mean For Today’s Integrators?

As mentioned, there are those that toe-drag, frequently having the end user escort them to the technology altar, while the prudent early adopters get that toe wet and then decide to take the plunge. 8K cables are an easy way to inch in: you need HDMI cables regardless, and making Ultra High Speed cables part of your repertoire now should at least partially eliminate cables as a factor when troubleshooting on site.

A Subset: Cable Lengths - Avoiding Short HDMI Cables

While on the subject of HDMI cables, Tech Support would like this to be known: despite the physical proximity of connected devices, AVPro Edge and Murideo recommend devices be connected by HDMI cables no shorter than 2 meters. Exhaustive testing has confirmed that shorter lengths, while obviously transferring signal, affect handshake repeatability and long-term system operational consistency. Failures occur when data arrives at the next-in-line device before Link Training has made a proper equalization assignment, and the hierarchy of HDCP tests does not have time to complete authentication. A longer cable (ideally, 3 meters) provides the physical distance for electronic timing to propagate fully. Maintain this HDMI cable regimen between all connecting HDMI devices: source components to switchers, switchers to AVRs, outputs to HDMI or HDBaseT transmitters, and from extension receivers into displays.
While these lengths may make it more difficult for a rack interior to be photo-worthy, or take a modicum of time to tidy up behind a display, they will greatly contribute to system stability. This will be of paramount importance with 8K due to higher bandwidth and the speed of signal transmission.

Advocating The Changeover

System infrastructure hardware is the logical follow-up to Ultra High Speed HDMI cabling. Integrators are as sensitive to budget stretching as end users; however, the best time to introduce hardware replacement (switchers and extenders) is during the discussion of upgrading components and displays (including clean slate proposals). The reasons for the refresh are in context with the ambitions of upgrading the display/projector and the audio side of a system. All devices in a system being contemporary to one another provides the best assurance of successful, trouble-free system deployment and operation.

8K Ain’t Going Away

8K will not be going away. For far more than a decade, content acquisition in Hollywood has been at 5K and 6K, elevating in the past half-dozen years to 8K and even higher. Initially, it provided non-animated special effects with more pixels, so guy wires, harnesses and other movie magic trickery could be “digitally erased” with no compromise to image fidelity. Now, remastering is doing for movies what it did decades back for musical catalogs: giving the public not a different version of their favorites, but rather an unchanged, better version.

In Hollywood workflows, 8K masters will eventually lead to today’s 4K hits (Top Gun: Maverick comes to mind) being re-released in the very near future in their original 8K splendor to an even more amazed audience. Consider a platform like Kaleidescape. While I have no information indicating anything of this sort may be forthcoming, what is to prevent them from developing an 8K player for 8K titles they secure for exclusive use in their store? It’s likely not even a whole step away, given their relationship with the content creation community. Previously, I have written how Dolby’s ambitions parallel future specifications already established. Dolby doesn’t drag Hollywood around by the collar, but almost. Their roadmap includes 12K, 120fps, uncompressed color, and 10,000 nits. Those are the chapters coming, after 8K’s is fully written.
